AI Is Rewriting Who Decides

Why decision-making, not productivity, is becoming the real competitive edge

Before the main story, a few headlines worth your attention.

NemoClaw has signaled a shift beyond chips into the orchestration layer, positioning AI agents as enterprise infrastructure, not just tools. NemoClaw is Nvidia’s framework for building and managing agentic AI systems that can plan, act, and coordinate tasks across enterprise workflows. More importantly, it reframed the conversation from AI that answers questions to systems that take action, with security and control built in for real organizational deployment.

AI-native startups are growing rapidly with dramatically smaller teams, sometimes under 10 employees. This shift is redefining traditional scaling models and in many cases reducing dependency on large operational headcount to drive revenue. For enterprises, it signals rising competition from highly efficient, AI-leveraged challengers. Fortune has the story.

Walmart and Target are racing to embed their products into AI platforms like ChatGPT and Google Gemini, shifting from traditional e-commerce to “AI-native” shopping experiences. Instead of driving traffic to websites, both retailers are building tools that allow consumers to discover, compare, and purchase products in conversational AI environments. Adweek has the story.

AI education is mission-critical. Having just led AI training sessions for a large organization spanning three continents, I’ve seen firsthand that the appetite to learn is high. But the most valuable education goes far beyond tool training. It sits at the intersection of how to think about AI, which strategies and tools fit which tasks, and how to reimagine working in teams when intelligence and labor are no longer limited to humans alone.

The Shift to AI Decision Power

For years, we’ve been asking the wrong question about AI and work. We’ve been focused on how much faster it makes us, how many hours it saves, how much output it generates. Those are easy metrics to see and easy ones to report. But they are not where the real shift is happening.

The deeper change, the one that is quietly reshaping how organizations function, is about who is actually making decisions. AI is not just accelerating workflows. It is redistributing decision-making authority across the enterprise. And once authority begins to shift, everything else follows. What work looks like, how expertise is applied, how performance is evaluated, and ultimately where value is created all start to change in ways that are still not fully understood. Sometimes humans are driving decisions, sometimes AI is, and sometimes humans are simply sandwiched in between.

The empirical evidence is now starting to line up around this idea. In large-scale studies of customer support, access to AI has been shown to increase productivity meaningfully, with the biggest gains accruing to less experienced workers. But what is more interesting is how those gains are actually achieved. AI embeds the judgment of top performers directly into frontline workflows, allowing less experienced employees to operate at a higher level. Decision-making capability, in effect, moves downward, closer to where the work is happening.

In consulting environments, the pattern is more conditional. AI improves both speed and quality within a defined frontier of capability, but performance drops when workers rely on it beyond that boundary. That creates a new requirement, not just to use AI, but to know when to trust it and when to override it. In writing and knowledge work more broadly, studies show that AI compresses performance differences across individuals, shifting effort away from first-pass creation toward editing, refinement, and judgment. Across all of these contexts, the common thread is that AI does not remove human decision-making. It redistributes it in a way that is dynamic, situational, and highly dependent on how people engage with the system.

This is where the productivity conversation begins to break down. We tend to assume that better tools lead directly to better outcomes, that capability translates cleanly into performance. But a growing body of research suggests that this is not how AI works in practice. When you look across a wide range of human-AI experiments, the combined performance of humans and AI is often worse than the best human or the best AI working alone, particularly in decision-heavy tasks. The issue is not capability. It is coordination between the humans and the AI.

In real-world settings, even when algorithms improve prediction accuracy significantly, those gains do not always translate into better decisions because people override, ignore, or misinterpret the recommendations. Authority has not been recalibrated to match capability. In some cases, organizations under-delegate and fail to take advantage of what AI can do. In others, they over-delegate and erode the human judgment that is required when AI reaches its limits. Most sit somewhere in between, operating in a state of ambiguity where neither the human nor the system is clearly accountable. The result is that productivity becomes less about what the technology can do and more about how decision rights are structured, how escalation works, and how accountability is defined.

What this means for business leaders

The most important shift to internalize is that AI adoption is not primarily a technology decision. It is a decision-making design challenge. Leaders who treat it as a tooling upgrade will only see incremental gains. Those who treat it as a redesign of decision-making authority will see compounding ones.

First, decision rights need to be made explicit. It is no longer sufficient to layer AI onto existing workflows and expect performance to improve. Organizations need to define where AI should lead, where humans should lead, and where authority should shift dynamically based on context. Without that clarity, coordination breaks down and productivity gains remain inconsistent.

Second, judgment becomes the critical capability. As AI takes on more of the initial execution, the value of the human shifts toward knowing when to trust the system and when to challenge it. Training programs should reflect this shift, focusing less on tool usage and more on decision quality, edge cases, and critical thinking in AI-augmented environments.

Third, escalation and override mechanisms need to be designed, not assumed. High-performing organizations are not the ones that always follow AI recommendations. They are the ones that know when not to. That requires clear pathways for intervention and well-defined accountability when decisions move between human and machine.

Fourth, leaders should look for coordination failures, not just capability gaps. When AI is not delivering expected results, the issue is often not the model itself but how it is being used within the organization. Misaligned incentives, unclear authority, and poor workflow integration are far more common constraints than technical limitations.

Finally, productivity should be viewed as a system-level outcome. Evaluating AI at the level of individual tasks will miss the broader impact. The real gains emerge when decision-making authority, workflows, and incentives are aligned to reflect what AI can and cannot do well.

The organizations that move fastest here will not simply be more efficient. They will be structurally different in how decisions are made. And that, more than any single tool or model, is what will define advantage in the next phase of AI adoption.

Where I’ve been

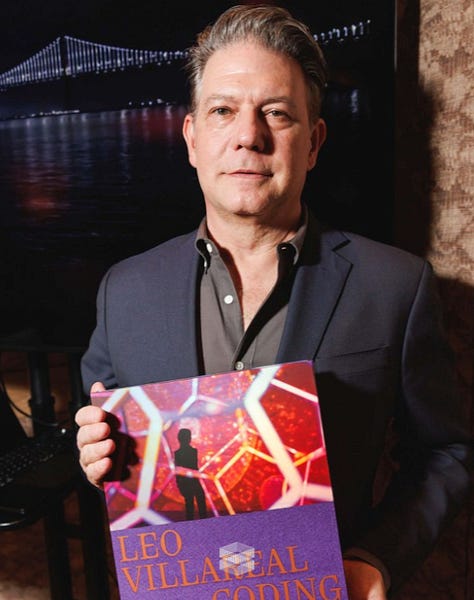

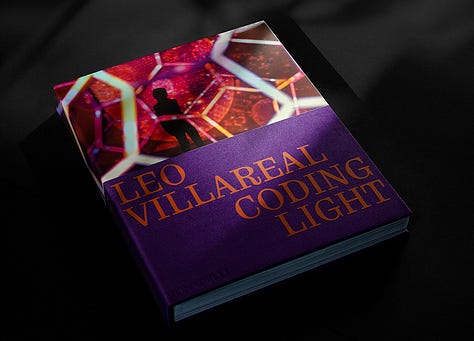

On Tuesday, I joined the Tishman Speyer team at Mission Rock here in San Francisco to celebrate the relaunch of the Bay Bridge lights with Leo Villareal, the lighting artist at the heart of the effort. Hearing him speak was inspiring, especially because of the fortitude, commitment, and courage that have defined his work over the past few decades.

What I’m reading

A Grim Truth Is Emerging in Employers’ AI Experiments (Futurism)

Google AI Overviews cut search clicks 42% (Search Engine Land)

Furniture brand OMHU’s AI Dog Ad (Ad Age)

Physical AI’s moment of acceleration (Deloitte Consulting)

What I’ve written lately

Fighting Cognitive Surrender (March 2025)

Who Remembers Wins (February 2025)

2025 AI Predictions: Identity & Agents (February 2025)

Claude Picked a Fight at the Super Bowl (February 2025)

AI Everywhere, Wisdom Nowhere (January 2025)

Shiv Singh is the CEO of Savvy Matters, which helps business teams translate AI disruption into practical business and marketing strategies, organizational design, executive-ready roadmaps, and bespoke education programs. He is also the Co-Founder of AI Trailblazers, a vibrant community uniting marketers, technologists, entrepreneurs, and venture capitalists at the forefront of AI.

A former two-time Chief Marketing & Customer Experience Officer and author of Marketing with AI for Dummies (4th print run, translated into five languages), Shiv built his career at LendingTree, Visa, PepsiCo, and The Expedia Group. He serves on the board of a publicly traded Fortune 300 company and is a private investor.